The Role of Sensing Technology in Robotics

Sensing technology plays a crucial role in how well robots function. Sensors enable robots to perceive their environment, make informed decisions, and navigate safely. Understanding the available sensors and their applications helps buyers, smart home users, and tech enthusiasts choose the right technologies for their needs.

Importance of Sensors in Robotics

Sensors are essential components in robotics as they provide vital information about the robot’s surroundings. They allow robots to gather data for various tasks such as mapping, navigation, and obstacle detection.

Here are some key reasons why sensors are important in robotics:

| Purpose | Description |
|---|---|
| Environmental Awareness | Sensors help robots detect and understand their surroundings. |
| Navigation | They enable robots to move safely and efficiently within an environment. |
| Interaction | Sensors facilitate communication between robots and users, enhancing functionality. |
| Data Collection | Robots can gather and analyze data from their environment for various applications. |

Understanding the capabilities and limitations of different sensing technologies is fundamental for optimizing robot performance. For a deeper look at how these sensors operate, explore our article on robot sensors and navigation.

Overview of Navigation Methods

Navigating through various environments requires different approaches, and robots can utilize multiple methods to achieve this. The main navigation methods include:

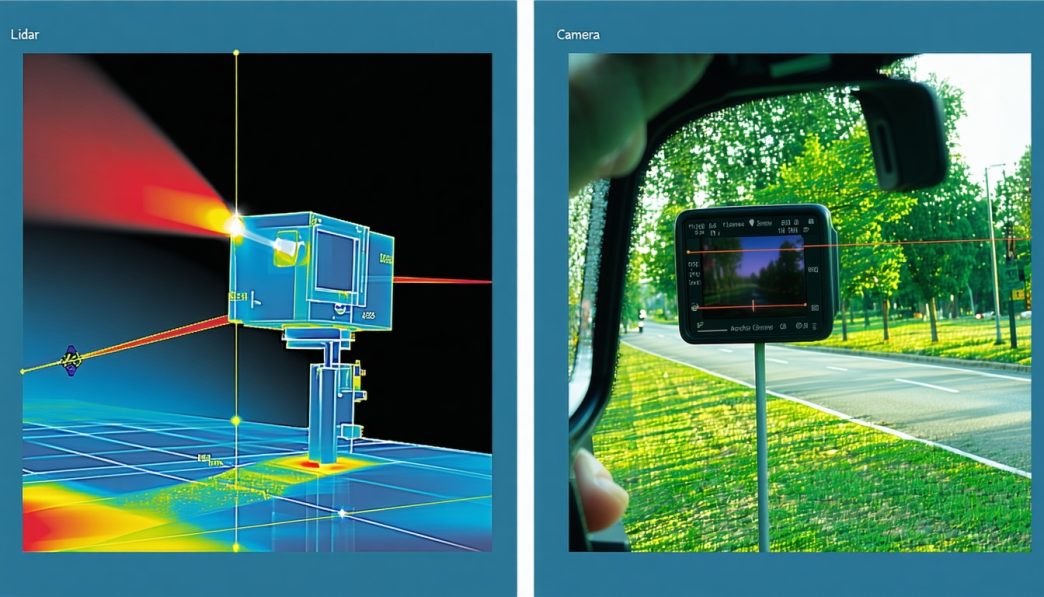

- Lidar Technology: Utilizes laser light to create 3D maps of the environment. Learn more about lidar vs camera based navigation and how it compares to other technologies.

- Camera-Based Navigation: Uses image processing to interpret the surroundings. This method enables depth perception through visual data, essential for tasks like mapping and object recognition. Detailed information can be found in our article on depth perception in robots.

- Infrared Sensors: Active infrared sensors detect obstacles by emitting infrared light and measuring its reflection, while passive ones sense heat signatures. They are commonly used in close-range navigation scenarios.

- Ultrasound Sensors: Function similarly to sonar, measuring distance through sound waves. They are effective for short-range detection.

- Encoders: Provide feedback on wheel rotation, allowing for precise control of movement.

Each method has unique advantages and limitations, making it important for robotics developers to choose the appropriate technology based on their specific application needs. For more insight into advanced navigation techniques, visit our articles on slam mapping for robots and indoor navigation for robots as well as outdoor navigation for robots.

Understanding these navigation methods and their respective sensors can lead to better design choices and enhanced functionalities in robotic systems. As technology advances, the fusion of different sensors will continue to play a pivotal role in the future of robotic navigation. Explore how multi sensor fusion in robots improves performance and reliability in navigating complex environments.

Lidar Technology

Lidar (Light Detection and Ranging) is a crucial sensing technology used in robotics to create detailed maps of environments and facilitate navigation. This section will explore how lidar functions and evaluate its strengths and weaknesses.

How Lidar Works

Lidar operates by emitting laser pulses and measuring the time it takes for these pulses to return after bouncing off an object. By analyzing the time delay and angle of the returning light, lidar systems can construct a precise three-dimensional representation of the environment.
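The time-of-flight principle described above can be sketched in a few lines. This is a minimal illustration rather than any particular sensor's driver: the function names `tof_to_range` and `polar_to_point` are hypothetical, and the example assumes the sensor reports a round-trip time together with the beam's azimuth and elevation angles.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum (m/s)


def tof_to_range(round_trip_s: float) -> float:
    """Convert a round-trip pulse time (seconds) into a one-way range (meters)."""
    # The pulse travels to the object and back, so the path is halved.
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def polar_to_point(range_m: float, azimuth_rad: float, elevation_rad: float = 0.0):
    """Convert one return (range plus beam angles) into an (x, y, z) point
    in the sensor's own frame."""
    horizontal = range_m * math.cos(elevation_rad)
    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    z = range_m * math.sin(elevation_rad)
    return (x, y, z)


if __name__ == "__main__":
    # A pulse returning after roughly 66.7 nanoseconds hit something about 10 m away.
    r = tof_to_range(66.7e-9)
    print(round(r, 2), polar_to_point(r, math.radians(90)))
```

Repeating the conversion for every return in a sweep yields the point cloud from which a map is built.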

Many lidar sensors mounted on mobile robots rotate to acquire a full view of their surroundings, capturing anywhere from thousands to millions of data points per second depending on the model. The collected data is then processed to generate detailed maps, allowing the robot to identify obstacles and navigate safely.

| Lidar Feature | Representative Value (varies by model) |
|---|---|
| Range | Up to 200 meters |
| Accuracy | ± 2 cm |
| Data Points | Over 1 million per second |

Pros and Cons of Lidar

Lidar technology offers several advantages and disadvantages that can influence its choice in comparison to other navigation methods, such as camera-based systems.

Pros

- High Precision: Lidar provides precise distance measurements with minimal error, making it suitable for applications requiring high accuracy.

- 3D Mapping: The ability to create detailed three-dimensional maps of environments enhances a robot’s navigation capabilities.

- Effective in Low Light: Because lidar supplies its own laser illumination, it works in darkness and in varied lighting conditions, unlike cameras that depend on ambient light.

Cons

- Cost: Lidar systems can be expensive, which may limit their accessibility for some users and applications.

- Sensitivity to Weather: Heavy rain, fog, or dust can interfere with the performance of lidar, affecting its accuracy and reliability.

- Limited Color Detection: Lidar does not capture color information, which may be necessary for certain applications, such as recognizing specific objects.

In comparison with camera-based navigation, lidar’s strengths in precision and environmental mapping make it an appealing choice for many robotic applications. However, the higher cost and weather sensitivity may lead users to evaluate all available options when seeking the best navigation solution for their robotics needs. For further insights into fusion technologies, check out our article on multi sensor fusion in robots.

Camera-Based Navigation

Camera-based navigation systems utilize visual data to help robots understand and interpret their environment. This technology is integral to enabling robots to see, map spaces, and move effectively within those spaces.

How Camera-Based Systems Work

Camera-based systems operate by capturing images of the surrounding area using one or more cameras. These images are processed using advanced algorithms that identify objects, recognize patterns, and understand spatial relationships. The system can create a visual map of the environment, which assists in navigation and obstacle avoidance.

The key components of a camera-based navigation system typically include:

- Image Capture: Cameras capture visual data at varying resolutions and frame rates.

- Processing: Specialized software analyzes the images for features such as edges, colors, and shapes.

- Decision Making: Based on the processed information, the robot determines its path and actions.
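The capture, process, and decide stages listed above can be sketched with a toy example. This is only an illustration under strong assumptions: `capture_frame` is a hypothetical stand-in that returns a tiny synthetic grayscale image instead of reading a real camera, the "processing" is a simple brightness difference between neighbouring pixels, and the threshold of 50 is an arbitrary choice.

```python
from typing import List

Image = List[List[int]]  # grayscale frame, pixel values 0-255


def capture_frame() -> Image:
    """Stand-in for a camera read: a dark floor with a bright object on the right."""
    return [[10, 10, 10, 10, 200, 200] for _ in range(4)]


def edge_strength(frame: Image) -> Image:
    """Processing step: absolute brightness difference between horizontal neighbours."""
    return [
        [abs(row[c + 1] - row[c]) for c in range(len(row) - 1)]
        for row in frame
    ]


def choose_direction(edges: Image, threshold: int = 50) -> str:
    """Decision step: steer away from the half of the image with more strong edges."""
    mid = len(edges[0]) // 2
    left = sum(1 for row in edges for v in row[:mid] if v > threshold)
    right = sum(1 for row in edges for v in row[mid:] if v > threshold)
    if left == right:
        return "straight"
    return "left" if right > left else "right"


if __name__ == "__main__":
    print(choose_direction(edge_strength(capture_frame())))
```

Real systems replace each stage with far more capable components, but the flow of data from image to features to action follows the same shape.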

These systems can be particularly effective in well-lit environments. For more details on related navigational methods, visit our article on robot sensors and navigation.

Advantages and Limitations of Camera-Based Navigation

Camera-based navigation comes with several advantages and limitations, which can influence the choice between different navigation technologies such as lidar or camera systems.

| Advantages | Limitations |
|---|---|
| Cost-effective compared to lidar systems | Performance can degrade in low light conditions |
| High resolution allows for detailed environmental mapping | Processing demands can lead to slower response times |
| Flexible and adaptable to various environments | Susceptible to visual obstructions (e.g., fog, glare) |
| Supports additional functionalities like object recognition and classification | Limited range compared to other technologies like lidar |

The effectiveness of camera-based navigation can also depend on specific scenarios. For example, indoor environments typically benefit from visual systems due to the presence of well-defined surfaces and lighting, while outdoor environments might pose challenges. For insights on navigating indoors, see our article on indoor navigation for robots; for outdoor scenarios, see outdoor navigation for robots.

Understanding the strengths and weaknesses of camera-based systems in comparison to other methods, such as lidar, can assist users in making informed decisions tailored to their specific robotics applications. For a more comprehensive overview, explore topics like depth perception in robots and robot obstacle detection and avoidance.

Infrared Sensors

Functionality of Infrared Sensors

Infrared (IR) sensors are used in many robotic applications, providing data for navigation and interaction with the environment. Depending on the type, they can detect obstacles, measure distances, and sense temperature variations.

Infrared sensors fall into two main types: active and passive. Active infrared sensors transmit IR light and measure its reflection from surrounding objects, while passive infrared sensors detect the infrared radiation that objects emit as heat. Together, the two types let robots perceive both the geometry and the heat of their surroundings.

| Sensor Type | Description | Application |
|---|---|---|
| Active IR Sensor | Emits IR light and measures reflection | Obstacle detection, distance measurement |
| Passive IR Sensor | Detects heat emitted by objects | Temperature sensing, heat detection |

Applications and Considerations

Infrared sensors are widely used in robotic applications, from household devices to advanced autonomous systems. They are particularly effective for close-range obstacle detection and navigation indoors, and they can also be used outdoors as long as ambient light interference is taken into account.

Common Applications:

- Obstacle Detection: IR sensors play a significant role in enabling robots to identify and avoid obstacles in their path. For more information on this topic, refer to our article on robot obstacle detection and avoidance.

- Distance Measurement: These sensors can accurately measure the distance between the robot and surrounding objects, aiding in precise navigation.

- Temperature Sensing: Passive infrared sensors can be used to monitor heat levels, providing insights for applications like environmental sensing or robotic health checks.

Considerations:

While infrared sensors offer a range of benefits, it is essential to acknowledge some limitations. They can be affected by ambient light conditions, which may interfere with their readings. Additionally, surface characteristics of objects can influence the reflectivity of IR light, resulting in variable distance measurements.

Combining infrared sensors with other technologies, such as lidar or camera-based systems, can enhance performance and reliability. This sensor fusion approach is discussed in detail in our article on multi sensor fusion in robots. For further insights on navigation methods, consider our articles on indoor navigation for robots and outdoor navigation for robots.

In summary, infrared sensors are a vital component in robotic navigation and perception, offering diverse applications while presenting some challenges that can be mitigated through sensor integration strategies.

Ultrasound Sensors

Operating Principles of Ultrasound Sensors

Ultrasound sensors operate by emitting high-frequency sound waves that are beyond the range of human hearing. These sensors send out a pulse of sound and measure the time it takes for the echo to return after bouncing off an object. By calculating the time taken for the sound wave to travel to the object and back, the sensor can determine the distance to that object.

The basic formula used for calculating distance with ultrasound sensors is as follows:

\[ \text{Distance} = \frac{\text{Speed of Sound} \times \text{Time}}{2} \]

Here, Time is the round-trip time of the echo, which is why the product is halved. The speed of sound in air is approximately 343 meters per second at room temperature (about 20 °C). Ultrasound sensors are widely used because they detect nearby objects accurately.

| Distance Measured (m) | Round-Trip Echo Time (ms) |
|---|---|
| 0.5 | 2.9 |
| 1.0 | 5.8 |
| 2.0 | 11.7 |
| 3.0 | 17.5 |
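The distance formula above translates directly into code. The sketch below is a minimal illustration, not a driver for any specific sensor: the function names are hypothetical, and the default speed of 343 m/s assumes dry air at room temperature, so a real system may want to adjust it for the actual conditions.

```python
SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in air at room temperature


def echo_time_to_distance(echo_time_s: float,
                          speed_m_s: float = SPEED_OF_SOUND_M_S) -> float:
    """Distance = speed of sound * round-trip time / 2."""
    if echo_time_s < 0:
        raise ValueError("echo time must be non-negative")
    return speed_m_s * echo_time_s / 2.0


def distance_to_echo_time(distance_m: float,
                          speed_m_s: float = SPEED_OF_SOUND_M_S) -> float:
    """Inverse of the formula: expected round-trip time for a target at distance_m."""
    return 2.0 * distance_m / speed_m_s


if __name__ == "__main__":
    for echo_ms in (3.0, 10.0):
        print(echo_ms, "ms ->", round(echo_time_to_distance(echo_ms / 1000.0), 3), "m")
```

With these assumptions, an echo that returns after 10 ms corresponds to a target roughly 1.7 m away.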

Use Cases and Benefits

Ultrasound sensors are versatile and find applications in many areas of robotics and automation. Some of the most common uses include:

- Obstacle Detection: Robots equipped with ultrasound sensors can navigate complex environments by detecting obstacles in their path. This helps enhance their ability to avoid collisions, making them safer in various settings, including homes and public spaces. For more on this topic, visit our article on robot obstacle detection and avoidance.

- Distance Measurement: These sensors are effective for measuring distances in applications such as drones, automated guided vehicles, and robot vacuums. Accurate distance measurement is essential for effective navigation.

- Indoor Navigation: Ultrasound can assist in indoor navigation scenarios where GPS signals are weak or unavailable. The technology can provide reliable positioning information in confined spaces. For further insights, see our article on indoor navigation for robots.

- Object Tracking: Robots utilize ultrasound sensors to track moving objects, which is crucial in fields like logistics, where robots need to identify and follow parcels or goods.

- Liquid Level Measurement: Ultrasound sensors are often used in industrial applications to measure liquid levels in tanks and reservoirs, ensuring efficient resource management.

The benefits of ultrasound sensors include cost-effectiveness, simplicity in integration, and the ability to function well in various environments. However, they are less reliable outdoors, where wind, temperature variations, and background noise affect the sound signal. For a comparison of navigation technologies, see our article on lidar vs camera based navigation.

Encoders

Understanding Encoder Technology

Encoders play a crucial role in robotic systems, serving as devices that convert rotational or linear motion into a digital signal. This information is vital for understanding position and movement, enabling robots to navigate their environments effectively. There are two primary types of encoders: incremental and absolute.

- Incremental Encoders: These encoders track movement by counting pulses as the shaft rotates. They provide relative position information but need a reference point after power loss to determine the absolute position.

- Absolute Encoders: These devices provide a unique position value at every shaft angle, allowing robots to know their exact location even without moving back to a zero point.

The choice between incremental and absolute encoders depends on the specific application and required precision.

| Encoder Type | Key Features | Applications |
|---|---|---|
| Incremental | Counts pulses; relative position | Robotics, automation systems |
| Absolute | Unique value for every position | Robotics, CNC machinery |
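To make the pulse-counting idea concrete, the sketch below converts an incremental encoder count into a shaft angle and a rolled distance. It is a minimal illustration with hypothetical names; `ticks_per_rev` and `wheel_diameter_m` are assumed parameters that depend on the actual hardware, and the distance calculation assumes the wheel does not slip.

```python
import math


def ticks_to_angle_rad(ticks: int, ticks_per_rev: int) -> float:
    """Shaft rotation (radians) since counting started, from an incremental count."""
    return 2.0 * math.pi * ticks / ticks_per_rev


def ticks_to_distance_m(ticks: int, ticks_per_rev: int, wheel_diameter_m: float) -> float:
    """Distance rolled by a wheel of the given diameter, assuming no slip.

    A negative tick count (reverse rotation) gives a negative distance.
    """
    circumference = math.pi * wheel_diameter_m
    return circumference * ticks / ticks_per_rev


if __name__ == "__main__":
    # Half a revolution of a 10 cm wheel on a 1024-tick encoder.
    print(ticks_to_angle_rad(512, 1024), ticks_to_distance_m(512, 1024, 0.10))
```

Because the count is relative to wherever counting began, the result is only meaningful after a known reference point has been established, which is the limitation noted for incremental encoders above.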

Contribution to Navigation in Robotics

Encoders significantly enhance the navigation capabilities of robots, particularly in applications involving movement and positioning. They work by providing real-time feedback on a robot’s speed, direction, and distance traveled. This information is essential for implementing accurate movement control and positioning strategies.
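One common way to turn encoder feedback into distance and direction is dead reckoning for a two-wheeled (differential-drive) robot. The sketch below assumes that drive layout, which the article does not specify, and uses a hypothetical `update_pose` function whose inputs are the distances each wheel rolled since the last update and the spacing between the wheels.

```python
import math


def update_pose(x: float, y: float, theta: float,
                d_left: float, d_right: float, wheel_base: float):
    """Dead-reckoning update for a differential-drive robot.

    d_left and d_right are the distances (m) each wheel rolled since the last
    update; wheel_base is the distance (m) between the two wheels.
    Returns the new (x, y, theta), with theta in radians.
    """
    d_center = (d_left + d_right) / 2.0       # distance moved by the robot's center
    d_theta = (d_right - d_left) / wheel_base  # change in heading
    # Use the heading halfway through the step as an approximation of the arc.
    heading = theta + d_theta / 2.0
    new_x = x + d_center * math.cos(heading)
    new_y = y + d_center * math.sin(heading)
    return new_x, new_y, theta + d_theta


def speed_m_s(distance_m: float, dt_s: float) -> float:
    """Average speed over one update interval."""
    return distance_m / dt_s


if __name__ == "__main__":
    pose = (0.0, 0.0, 0.0)
    for _ in range(10):  # ten small steps with the right wheel slightly faster
        pose = update_pose(*pose, d_left=0.010, d_right=0.012, wheel_base=0.30)
    print(pose)
```

Errors from wheel slip accumulate with every update, which is one reason encoder odometry is usually combined with other sensors.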

Key Contributions of Encoders in Robotics Navigation:

- Precision in Movement: Encoders enable robots to make precise movements. This is critical for tasks such as delivery and mapping rooms in smart home environments.

- Feedback for Control Algorithms: The data from encoders is used in control algorithms to adjust the robot’s actions, ensuring that it follows a predetermined path.

- Integration with Other Sensors: Encoders often work in tandem with other sensing technologies, such as lidar or camera systems, to improve navigation strategies. The fusion of these technologies helps create a comprehensive map of the environment, enhancing robot performance. For more on how these systems work together, refer to our article on multi sensor fusion in robots.

- Assistance in Localization: By combining encoder readings with data from other sensors, robots can accurately localize themselves within their environment. This is especially important in applications like indoor navigation for robots and outdoor navigation for robots.

| Contribution | Benefit |
|---|---|
| Precision in Movement | Enables accurate navigation |
| Feedback for Control | Adjusts actions dynamically |
| Integration with Sensors | Increases overall system efficiency |
| Assistance in Localization | Enhances positioning accuracy |
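As an illustration of encoder feedback in a control loop, the sketch below shows a simple proportional correction that keeps a two-wheeled robot driving straight. This is one basic approach among many, not a description of any particular product: the function name, the gain `kp`, and the normalized speed range of 0 to 1 are all assumptions made for the example.

```python
def straight_line_speeds(left_ticks: int, right_ticks: int, base_speed: float,
                         kp: float = 0.01, max_speed: float = 1.0):
    """Return (left_speed, right_speed) commands that reduce the tick difference.

    A positive error means the left wheel has turned further than the right,
    so the robot is drifting right; the left wheel is slowed and the right
    wheel is sped up in proportion to the error.
    """
    error = left_ticks - right_ticks
    correction = kp * error

    def clamp(value: float) -> float:
        # Keep commands inside the assumed normalized range [0, max_speed].
        return max(0.0, min(max_speed, value))

    return clamp(base_speed - correction), clamp(base_speed + correction)


if __name__ == "__main__":
    print(straight_line_speeds(left_ticks=110, right_ticks=100, base_speed=0.5))
```

Calling the function on every control cycle with fresh encoder counts continually nudges the wheel speeds back toward a straight path.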

The integration of encoder technology ensures that robots can navigate effectively while adapting to their surroundings. Understanding these systems is pivotal for those interested in the nuances of robotic sensors and navigation, especially in comparing technologies like lidar vs camera based navigation. Detecting and avoiding obstacles is also critical, which is explored in our article on robot obstacle detection and avoidance.

Fusion of Sensing Technologies

In the field of robotics, combining multiple sensors is becoming an essential approach for enhancing performance. This fusion of sensing technologies allows robots to navigate complex environments more effectively, leveraging the strengths of each sensor type while minimizing their individual limitations.

Combining Multiple Sensors for Enhanced Performance

Sensor fusion involves integrating data from various types of sensors to create a more comprehensive understanding of the robot’s surroundings. This approach can improve accuracy, reliability, and efficiency in navigation tasks. By using multiple sensors such as Lidar, cameras, infrared, and ultrasound, robots can gather a broader range of information.

The table below highlights the strengths and weaknesses of various sensors when integrated for optimal navigation.

| Sensor Type | Strengths | Weaknesses |
|---|---|---|
| Lidar | Precise distance measurements, works in various lighting conditions | Costly, can struggle in reflective environments |
| Camera | High-resolution images, can recognize objects and features | Limited in low light, susceptible to occlusions |
| Infrared Sensors | Good for proximity sensing, measures heat | Limited range, affected by interference such as sunlight |
| Ultrasound Sensors | Effective for short-range detection, low cost | Limited in complex environments, lower resolution compared to optical sensors |

By blending these sensor types, robots can benefit from improved depth perception and mapping capabilities. For more insights into depth perception, refer to our article on depth perception in robots.
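One simple way to combine readings from different sensors is an inverse-variance weighted average, in which more precise sensors get more weight. The sketch below is a minimal illustration of that idea, not the method used by any specific robot; the function name and the example variances are hypothetical, and it assumes the readings measure the same distance with independent errors.

```python
from typing import Iterable, Tuple


def fuse_measurements(readings: Iterable[Tuple[float, float]]) -> Tuple[float, float]:
    """Fuse (value, variance) pairs into a single estimate.

    Each reading is weighted by 1 / variance, so a precise sensor dominates
    a noisy one. Returns (fused_value, fused_variance).
    """
    readings = list(readings)
    if not readings:
        raise ValueError("at least one reading is required")
    weights = [1.0 / variance for _, variance in readings]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, readings)) / total_weight
    return fused_value, 1.0 / total_weight


if __name__ == "__main__":
    lidar = (2.00, 0.01)       # meters, assumed variance (more precise)
    ultrasound = (2.20, 0.04)  # meters, assumed variance (noisier)
    print(fuse_measurements([lidar, ultrasound]))
```

The fused estimate lands closer to the more precise reading, and its variance is smaller than that of either input, which is the basic benefit that sensor fusion aims for.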

Examples of Sensor Fusion in Robotics

Various robotic applications have successfully employed sensor fusion for enhanced navigation and operational capabilities.

- Autonomous Delivery Bots: These robots often utilize a combination of lidar and camera systems to navigate urban environments. Lidar provides accurate distance measurements, while cameras help with identifying obstacles and ensuring safe movement.

- Robotic Vacuum Cleaners: Many models employ sensor fusion by combining infrared sensors for detecting nearby objects and cameras for mapping the environment. This allows them to efficiently clean floors without missing spots or hitting obstacles.

- Humanoid Robots: In humanoid robots, sensor fusion is critical for achieving balance and navigating through environments. Sensors such as gyroscopes, encoders, and cameras work together to enhance movement coordination and interaction.

- SLAM Technology: Simultaneous Localization and Mapping (SLAM) techniques greatly benefit from sensor fusion. By integrating data from various sensors, robots can build real-time maps of unknown environments while tracking their position. For more on SLAM, visit our article on slam mapping for robots.

The combination of diverse sensing technologies enables robots to perform complex tasks with increased efficiency and safety, enhancing their usefulness in both home and industrial settings. Readers interested in where the field is heading can explore our article on the future of robotic navigation.